Introduction to Kurobako: A Benchmark Tool for Hyperparameter Optimization Algorithms - Preferred Networks Research & Development

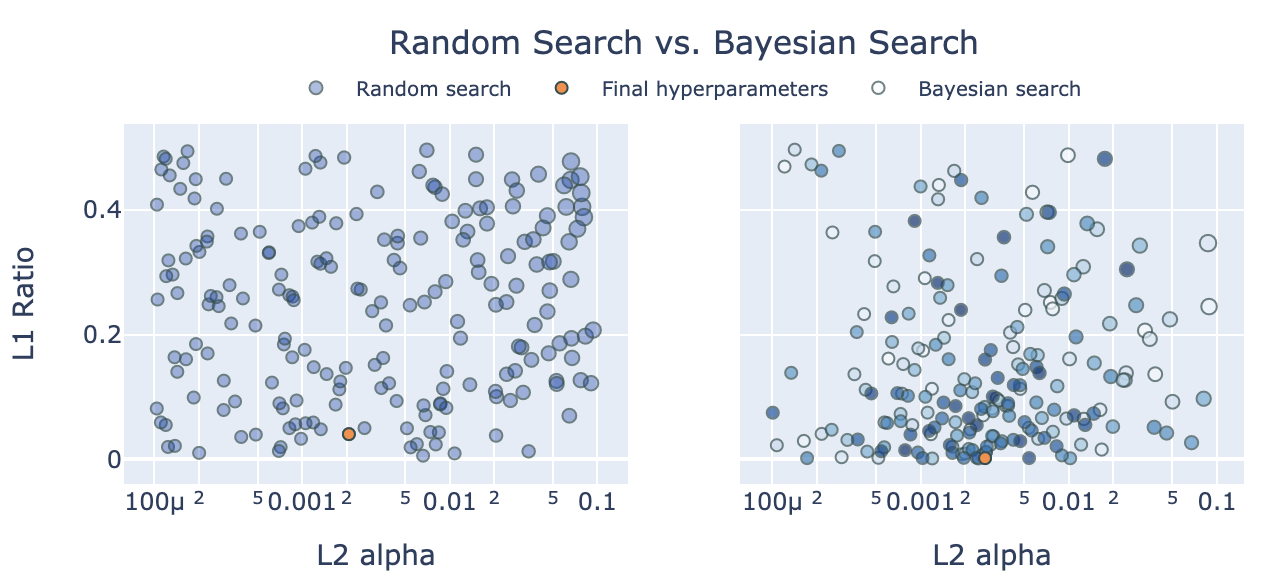

Beyond Grid Search: Using Hyperopt, Optuna, and Ray Tune to hypercharge hyperparameter tuning for XGBoost and LightGBM

Optimization starts from scratch after switching sampler from TPE to CMA-ES · Issue #1318 · optuna/optuna · GitHub

![Python: Optuna Sampler Comparison (TPESampler vs. SkoptSampler)](https://blog.kakaocdn.net/dn/99fMl/btqBuPO7Zgt/H3x7p66Z4f0iXfRBDKQStK/img.png)