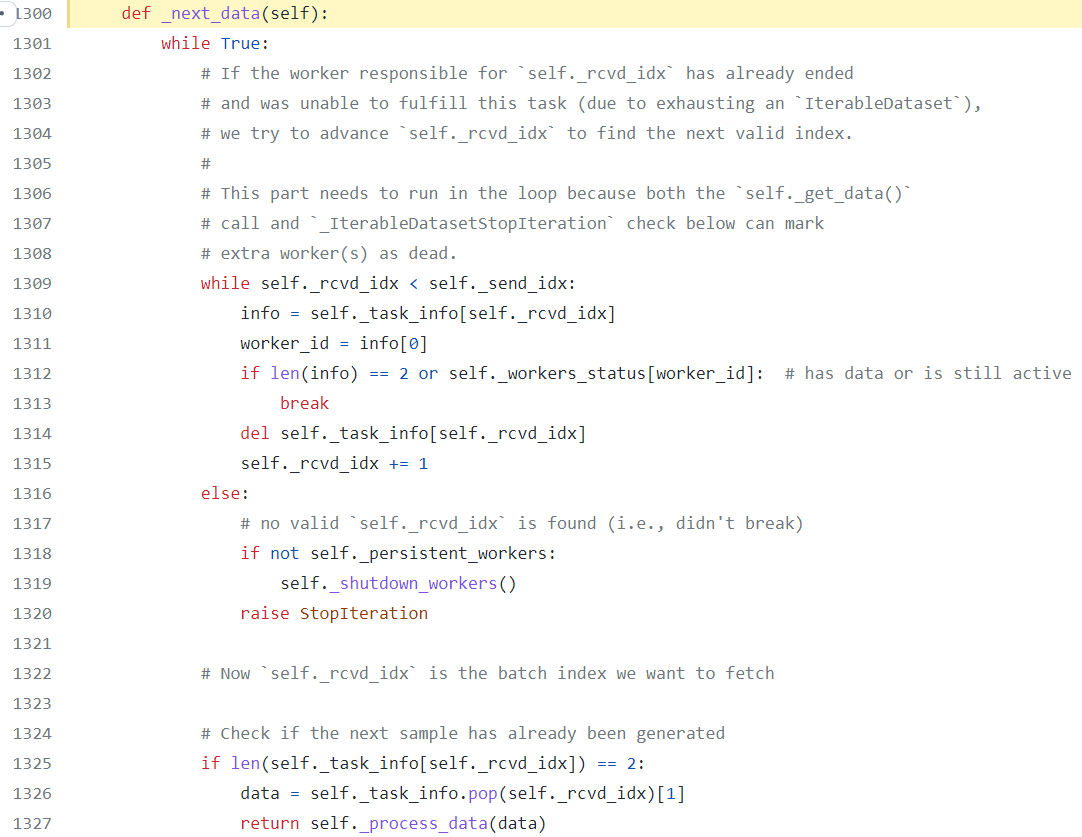

ChatGPT — Mastering Mini-Batch Training in PyTorch: A Comprehensive Guide to the DataLoader Class | by Sue | MLearning.ai | Medium

PyTorch BatchSampler still loads from Dataset one-by-one · Issue #5505 · huggingface/datasets · GitHub

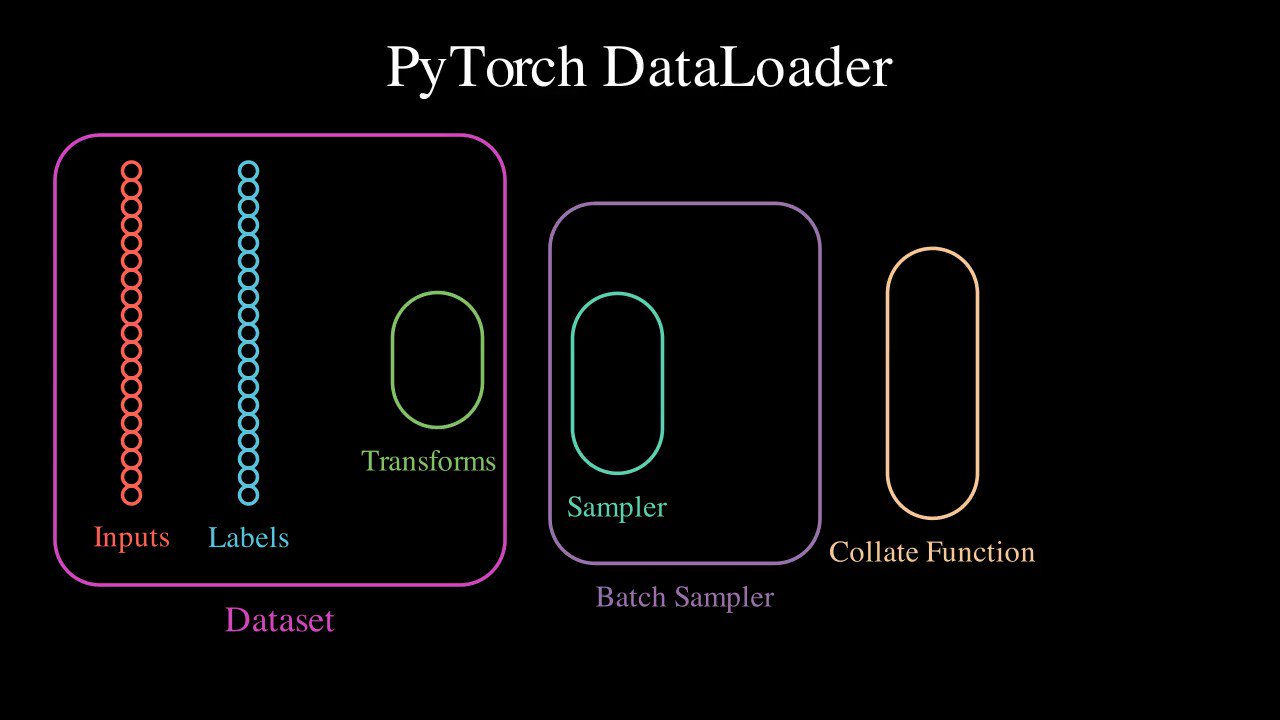

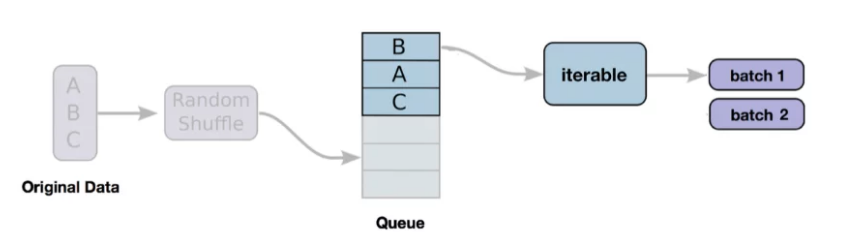

Scott Condron on X: "Here's an animation of a @PyTorch DataLoader. It turns your dataset into a shuffled, batched tensors iterator. (This is my first animation using @manim_community, the community fork of @

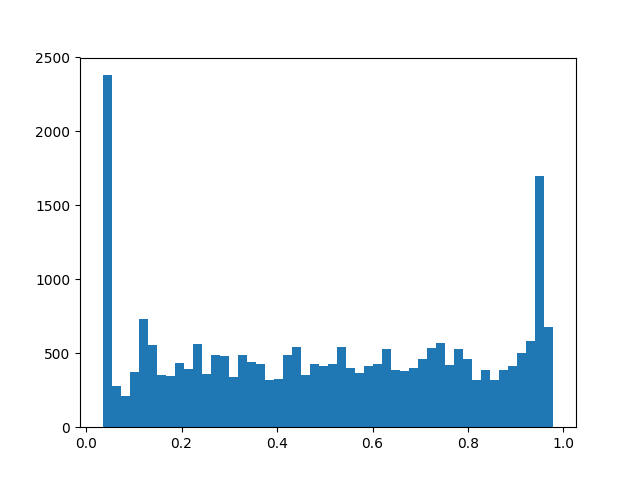

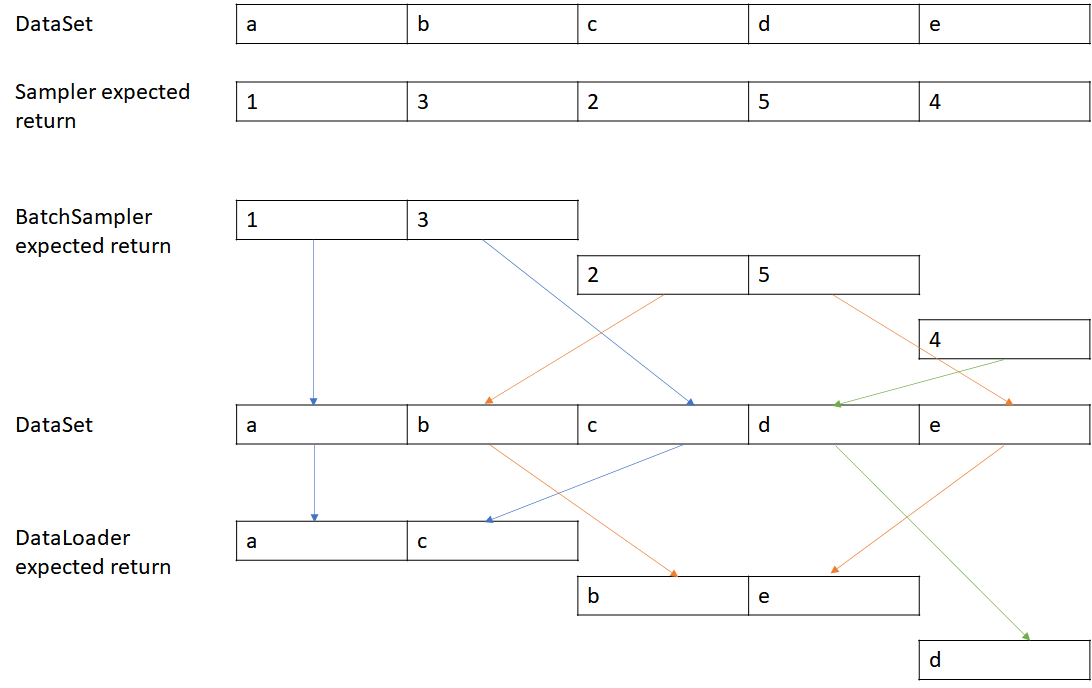

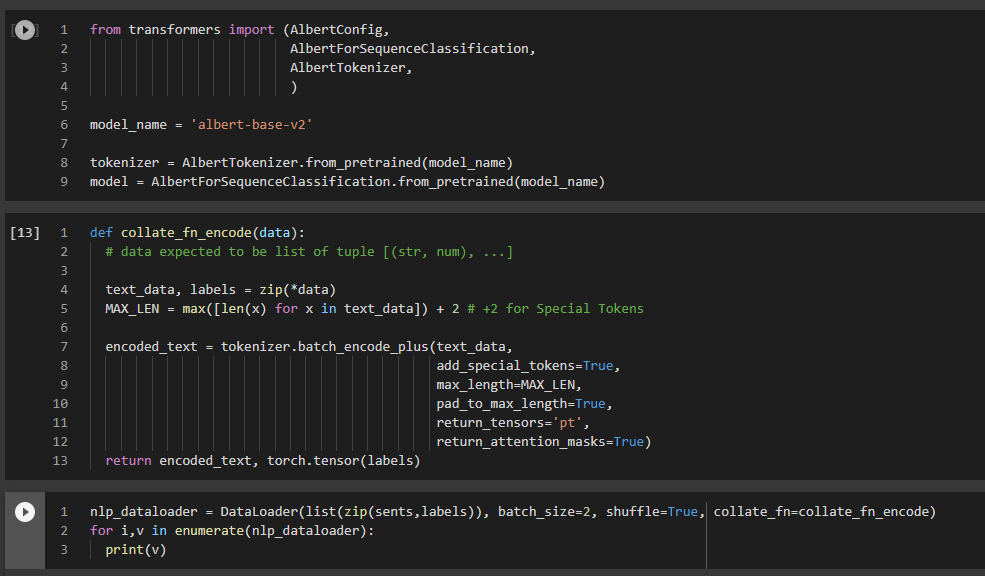

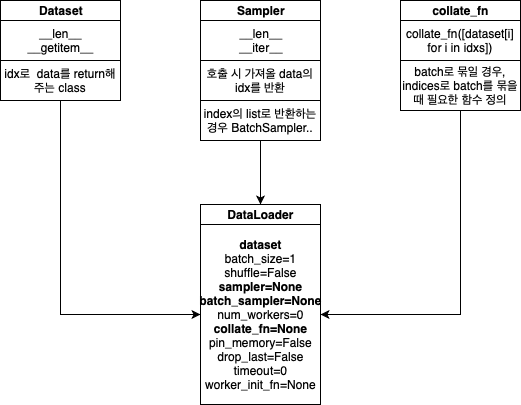

PyTorch Dataset, DataLoader, Sampler and the collate_fn | by Stephen Cow Chau | Geek Culture | Medium

How distributed training works in Pytorch: distributed data-parallel and mixed-precision training | AI Summer

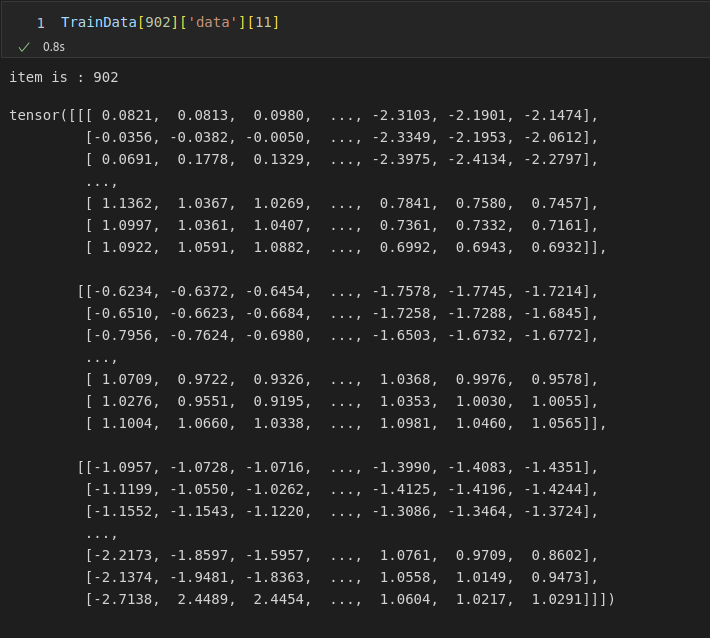

Silent failing of batch_sampler when the data points are lists of tensors. · Issue #32851 · pytorch/pytorch · GitHub

PyTroch dataloader at its own assigns a value to batch size of label (target), rather the initialized one - PyTorch Forums

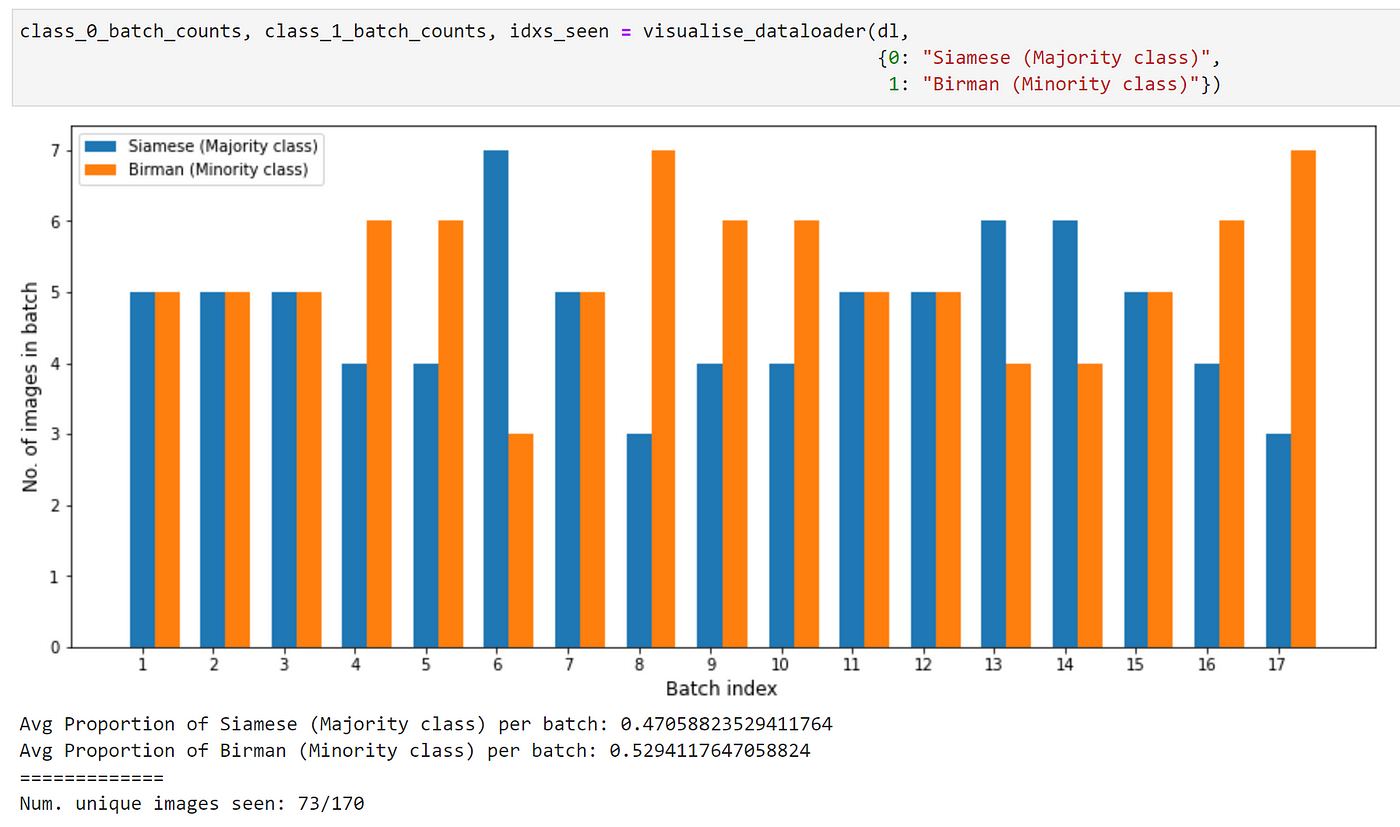

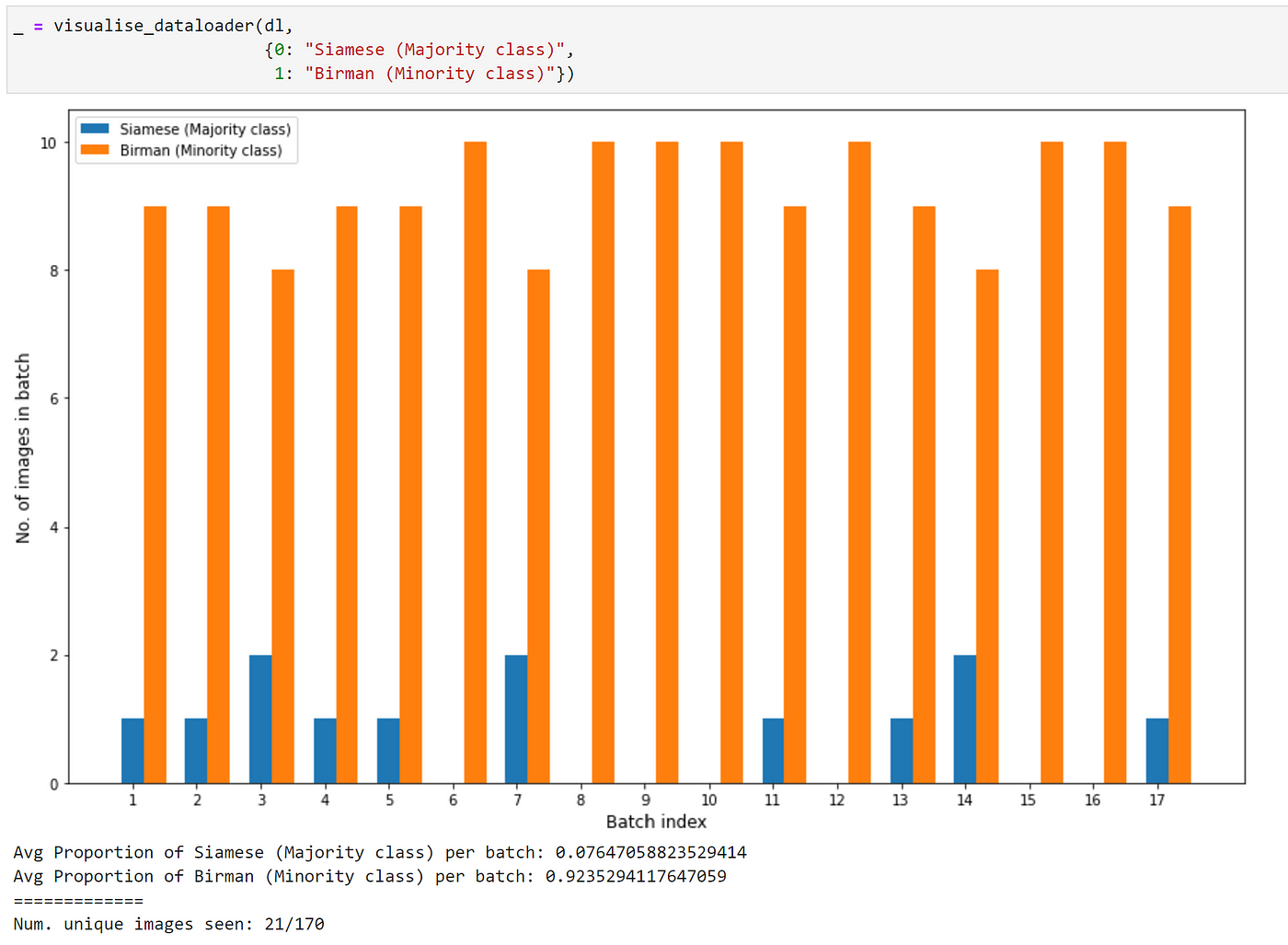

![PyTorch [Basics] — Sampling Samplers | by Akshaj Verma | Towards Data Science](https://miro.medium.com/v2/resize:fit:1200/1*3Dsdw-L4qVhT1WkyLvtsPg.jpeg)

![[Pytorch] Sampler, DataLoader, and how data batches are formed - Zhihu](https://picx.zhimg.com/v2-3da901255666b8290485fc7a41a57982_720w.jpg?source=172ae18b)